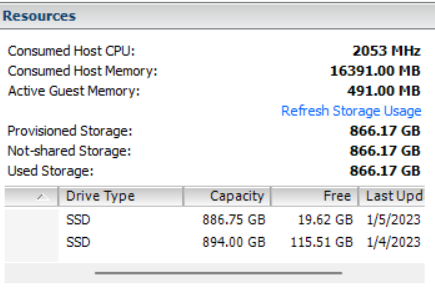

@diana, we purchased a 3.84 TB HDD and dedicated it to the instance, so I think disk space is taken care of. My problem is that the app still keeps crashing, and since yesterday I haven’t managed to get it running. We are currently at around 50K records with a target of 300K records, which is why we need a permanent fix.

[info] 2023-01-10T08:22:39.442823Z couchdb@127.0.0.1 <0.9.0> -------- Application khash started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:39.450312Z couchdb@127.0.0.1 <0.9.0> -------- Application couch_event started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:39.450539Z couchdb@127.0.0.1 <0.9.0> -------- Application hyper started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:39.457085Z couchdb@127.0.0.1 <0.9.0> -------- Application ibrowse started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:39.463119Z couchdb@127.0.0.1 <0.9.0> -------- Application ioq started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:39.463321Z couchdb@127.0.0.1 <0.9.0> -------- Application mochiweb started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:39.472482Z couchdb@127.0.0.1 <0.212.0> -------- Apache CouchDB 2.3.1 is starting.

[info] 2023-01-10T08:22:39.472570Z couchdb@127.0.0.1 <0.213.0> -------- Starting couch_sup

[notice] 2023-01-10T08:22:39.482610Z couchdb@127.0.0.1 <0.96.0> -------- config: [features] pluggable-storage-engines set to true for reason nil

[info] 2023-01-10T08:22:39.590670Z couchdb@127.0.0.1 <0.212.0> -------- Apache CouchDB has started. Time to relax.

[info] 2023-01-10T08:22:39.590895Z couchdb@127.0.0.1 <0.212.0> -------- Apache CouchDB has started on http://0.0.0.0:5987/

[info] 2023-01-10T08:22:39.591163Z couchdb@127.0.0.1 <0.9.0> -------- Application couch started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:39.591242Z couchdb@127.0.0.1 <0.9.0> -------- Application ets_lru started on node ‘couchdb@127.0.0.1’

[error] 2023-01-10T08:22:39.621402Z couchdb@127.0.0.1 <0.260.0> -------- Could not get design docs for <<“shards/80000000-9fffffff/medic-user-anastasia_okello-meta.1669742095”>> error:{badarg,[{ets,member,[mem3_openers,<<“medic-user-anastasia_okello-meta”>>],[]},{mem3_shards,maybe_spawn_shard_writer,3,[{file,“src/mem3_shards.erl”},{line,476}]},{mem3_shards,load_shards_from_db,2,[{file,“src/mem3_shards.erl”},{line,381}]},{mem3_shards,load_shards_from_disk,1,[{file,“src/mem3_shards.erl”},{line,370}]},{mem3_shards,for_db,2,[{file,“src/mem3_shards.erl”},{line,59}]},{fabric_view_all_docs,go,5,[{file,“src/fabric_view_all_docs.erl”},{line,24}]},{couch_db,‘-get_design_docs/1-fun-0-’,1,[{file,“src/couch_db.erl”},{line,627}]}]}

[error] 2023-01-10T08:22:39.621496Z couchdb@127.0.0.1 emulator -------- Error in process <0.272.0> on node ‘couchdb@127.0.0.1’ with exit value:

{badarg,[{ets,member,[mem3_openers,<<“medic-user-anastasia_okello-meta”>>],[]},{mem3_shards,maybe_spawn_shard_writer,3,[{file,“src/mem3_shards.erl”},{line,476}]},{mem3_shards,load_shards_from_db,2,[{file,“src/mem3_shards.erl”},{line,381}]},{mem3_shards,load_shards_from_disk,1,[{file,“src/mem3_shards.erl”},{line,370}]},{mem3_shards,for_db,2,[{file,“src/mem3_shards.erl”},{line,59}]},{fabric_view_all_docs,go,5,[{file,“src/fabric_view_all_docs.erl”},{line,24}]},{couch_db,‘-get_design_docs/1-fun-0-’,1,[{file,“src/couch_db.erl”},{line,627}]}]}

[notice] 2023-01-10T08:22:39.624499Z couchdb@127.0.0.1 <0.279.0> -------- rexi_server : started servers

[notice] 2023-01-10T08:22:39.628541Z couchdb@127.0.0.1 <0.283.0> -------- rexi_buffer : started servers

[info] 2023-01-10T08:22:39.628795Z couchdb@127.0.0.1 <0.9.0> -------- Application rexi started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:39.669903Z couchdb@127.0.0.1 <0.9.0> -------- Application mem3 started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:39.670279Z couchdb@127.0.0.1 <0.9.0> -------- Application fabric[2023-01-10 08:22:41] {“Kernel pid terminated”,application_controller,“{application_terminated,couch_log,shutdown}”}

Crash dump is being written to: erl_crash.dump…[info] 2023-01-10T08:22:43.966931Z couchdb@127.0.0.1 <0.9.0> -------- Application couch_log started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:43.972637Z couchdb@127.0.0.1 <0.9.0> -------- Application folsom started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:44.020758Z couchdb@127.0.0.1 <0.9.0> -------- Application couch_stats started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:44.020994Z couchdb@127.0.0.1 <0.9.0> -------- Application khash started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:44.029092Z couchdb@127.0.0.1 <0.9.0> -------- Application couch_event started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:44.029320Z couchdb@127.0.0.1 <0.9.0> -------- Application hyper started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:44.036195Z couchdb@127.0.0.1 <0.9.0> -------- Application ibrowse started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:44.042446Z couchdb@127.0.0.1 <0.9.0> -------- Application ioq started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:44.042646Z couchdb@127.0.0.1 <0.9.0> -------- Application mochiweb started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:44.052563Z couchdb@127.0.0.1 <0.212.0> -------- Apache CouchDB 2.3.1 is starting.

[info] 2023-01-10T08:22:44.052653Z couchdb@127.0.0.1 <0.213.0> -------- Starting couch_sup

[notice] 2023-01-10T08:22:44.063314Z couchdb@127.0.0.1 <0.96.0> -------- config: [features] pluggable-storage-engines set to true for reason nil

[info] 2023-01-10T08:22:44.172059Z couchdb@127.0.0.1 <0.212.0> -------- Apache CouchDB has started. Time to relax.

[info] 2023-01-10T08:22:44.172320Z couchdb@127.0.0.1 <0.212.0> -------- Apache CouchDB has started on http://0.0.0.0:5987/

[info] 2023-01-10T08:22:44.172670Z couchdb@127.0.0.1 <0.9.0> -------- Application couch started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:44.173048Z couchdb@127.0.0.1 <0.9.0> -------- Application ets_lru started on node ‘couchdb@127.0.0.1’

[error] 2023-01-10T08:22:44.200255Z couchdb@127.0.0.1 <0.260.0> -------- Could not get design docs for <<“shards/80000000-9fffffff/medic-user-anastasia_okello-meta.1669742095”>> error:{badarg,[{ets,member,[mem3_openers,<<“medic-user-anastasia_okello-meta”>>],[]},{mem3_shards,maybe_spawn_shard_writer,3,[{file,“src/mem3_shards.erl”},{line,476}]},{mem3_shards,load_shards_from_db,2,[{file,“src/mem3_shards.erl”},{line,381}]},{mem3_shards,load_shards_from_disk,1,[{file,“src/mem3_shards.erl”},{line,370}]},{mem3_shards,for_db,2,[{file,“src/mem3_shards.erl”},{line,59}]},{fabric_view_all_docs,go,5,[{file,“src/fabric_view_all_docs.erl”},{line,24}]},{couch_db,‘-get_design_docs/1-fun-0-’,1,[{file,“src/couch_db.erl”},{line,627}]}]}

[error] 2023-01-10T08:22:44.200474Z couchdb@127.0.0.1 emulator -------- Error in process <0.272.0> on node ‘couchdb@127.0.0.1’ with exit value:

{badarg,[{ets,member,[mem3_openers,<<“medic-user-anastasia_okello-meta”>>],[]},{mem3_shards,maybe_spawn_shard_writer,3,[{file,“src/mem3_shards.erl”},{line,476}]},{mem3_shards,load_shards_from_db,2,[{file,“src/mem3_shards.erl”},{line,381}]},{mem3_shards,load_shards_from_disk,1,[{file,“src/mem3_shards.erl”},{line,370}]},{mem3_shards,for_db,2,[{file,“src/mem3_shards.erl”},{line,59}]},{fabric_view_all_docs,go,5,[{file,“src/fabric_view_all_docs.erl”},{line,24}]},{couch_db,‘-get_design_docs/1-fun-0-’,1,[{file,“src/couch_db.erl”},{line,627}]}]}

[notice] 2023-01-10T08:22:44.203548Z couchdb@127.0.0.1 <0.279.0> -------- rexi_server : started servers

[notice] 2023-01-10T08:22:44.206526Z couchdb@127.0.0.1 <0.283.0> -------- rexi_buffer : started servers

[info] 2023-01-10T08:22:44.206809Z couchdb@127.0.0.1 <0.9.0> -------- Application rexi started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:44.235828Z couchdb@127.0.0.1 <0.9.0> -------- Application mem3 started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:44.235902Z couchdb@127.0.0.1 <0.9.0> -------- Application fabric[2023-01-10 08:22:45] {“Kernel pid terminated”,application_controller,“{application_terminated,couch_log,shutdown}”}

Crash dump is being written to: erl_crash.dump…[info] 2023-01-10T08:22:48.631919Z couchdb@127.0.0.1 <0.9.0> -------- Application couch_log started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:48.637156Z couchdb@127.0.0.1 <0.9.0> -------- Application folsom started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:48.683888Z couchdb@127.0.0.1 <0.9.0> -------- Application couch_stats started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:48.684123Z couchdb@127.0.0.1 <0.9.0> -------- Application khash started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:48.691647Z couchdb@127.0.0.1 <0.9.0> -------- Application couch_event started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:48.691869Z couchdb@127.0.0.1 <0.9.0> -------- Application hyper started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:48.698596Z couchdb@127.0.0.1 <0.9.0> -------- Application ibrowse started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:48.704658Z couchdb@127.0.0.1 <0.9.0> -------- Application ioq started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:48.704856Z couchdb@127.0.0.1 <0.9.0> -------- Application mochiweb started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:48.713968Z couchdb@127.0.0.1 <0.212.0> -------- Apache CouchDB 2.3.1 is starting.

[info] 2023-01-10T08:22:48.714057Z couchdb@127.0.0.1 <0.213.0> -------- Starting couch_sup

[notice] 2023-01-10T08:22:48.724377Z couchdb@127.0.0.1 <0.96.0> -------- config: [features] pluggable-storage-engines set to true for reason nil

[info] 2023-01-10T08:22:48.832903Z couchdb@127.0.0.1 <0.212.0> -------- Apache CouchDB has started. Time to relax.

[info] 2023-01-10T08:22:48.833023Z couchdb@127.0.0.1 <0.212.0> -------- Apache CouchDB has started on http://0.0.0.0:5987/

[info] 2023-01-10T08:22:48.833345Z couchdb@127.0.0.1 <0.9.0> -------- Application couch started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:48.833437Z couchdb@127.0.0.1 <0.9.0> -------- Application ets_lru started on node ‘couchdb@127.0.0.1’

[error] 2023-01-10T08:22:48.870201Z couchdb@127.0.0.1 emulator -------- Error in process <0.268.0> on node ‘couchdb@127.0.0.1’ with exit value:

{badarg,[{ets,member,[mem3_openers,<<“medic-user-anastasia_okello-meta”>>],[]},{mem3_shards,maybe_spawn_shard_writer,3,[{file,“src/mem3_shards.erl”},{line,476}]},{mem3_shards,load_shards_from_db,2,[{file,“src/mem3_shards.erl”},{line,381}]},{mem3_shards,load_shards_from_disk,1,[{file,“src/mem3_shards.erl”},{line,370}]},{mem3_shards,for_db,2,[{file,“src/mem3_shards.erl”},{line,59}]},{fabric_view_all_docs,go,5,[{file,“src/fabric_view_all_docs.erl”},{line,24}]},{couch_db,‘-get_design_docs/1-fun-0-’,1,[{file,“src/couch_db.erl”},{line,627}]}]}

[error] 2023-01-10T08:22:48.870250Z couchdb@127.0.0.1 <0.260.0> -------- Could not get design docs for <<“shards/80000000-9fffffff/medic-user-anastasia_okello-meta.1669742095”>> error:{badarg,[{ets,member,[mem3_openers,<<“medic-user-anastasia_okello-meta”>>],[]},{mem3_shards,maybe_spawn_shard_writer,3,[{file,“src/mem3_shards.erl”},{line,476}]},{mem3_shards,load_shards_from_db,2,[{file,“src/mem3_shards.erl”},{line,381}]},{mem3_shards,load_shards_from_disk,1,[{file,“src/mem3_shards.erl”},{line,370}]},{mem3_shards,for_db,2,[{file,“src/mem3_shards.erl”},{line,59}]},{fabric_view_all_docs,go,5,[{file,“src/fabric_view_all_docs.erl”},{line,24}]},{couch_db,‘-get_design_docs/1-fun-0-’,1,[{file,“src/couch_db.erl”},{line,627}]}]}

[notice] 2023-01-10T08:22:48.877790Z couchdb@127.0.0.1 <0.279.0> -------- rexi_server : started servers

[notice] 2023-01-10T08:22:48.882161Z couchdb@127.0.0.1 <0.283.0> -------- rexi_buffer : started servers

[info] 2023-01-10T08:22:48.882463Z couchdb@127.0.0.1 <0.9.0> -------- Application rexi started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:48.926548Z couchdb@127.0.0.1 <0.9.0> -------- Application mem3 started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:48.926846Z couchdb@127.0.0.1 <0.9.0> -------- Application fabric[2023-01-10 08:22:50] {“Kernel pid terminated”,application_controller,“{application_terminated,couch_log,shutdown}”}

Crash dump is being written to: erl_crash.dump…[info] 2023-01-10T08:22:53.251555Z couchdb@127.0.0.1 <0.9.0> -------- Application couch_log started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:53.257189Z couchdb@127.0.0.1 <0.9.0> -------- Application folsom started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:53.304658Z couchdb@127.0.0.1 <0.9.0> -------- Application couch_stats started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:53.304894Z couchdb@127.0.0.1 <0.9.0> -------- Application khash started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:53.312714Z couchdb@127.0.0.1 <0.9.0> -------- Application couch_event started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:53.312943Z couchdb@127.0.0.1 <0.9.0> -------- Application hyper started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:53.319791Z couchdb@127.0.0.1 <0.9.0> -------- Application ibrowse started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:53.326112Z couchdb@127.0.0.1 <0.9.0> -------- Application ioq started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:53.326309Z couchdb@127.0.0.1 <0.9.0> -------- Application mochiweb started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:53.336257Z couchdb@127.0.0.1 <0.212.0> -------- Apache CouchDB 2.3.1 is starting.

[info] 2023-01-10T08:22:53.336347Z couchdb@127.0.0.1 <0.213.0> -------- Starting couch_sup

[notice] 2023-01-10T08:22:53.346781Z couchdb@127.0.0.1 <0.96.0> -------- config: [features] pluggable-storage-engines set to true for reason nil

[info] 2023-01-10T08:22:53.454718Z couchdb@127.0.0.1 <0.212.0> -------- Apache CouchDB has started. Time to relax.

[info] 2023-01-10T08:22:53.454839Z couchdb@127.0.0.1 <0.212.0> -------- Apache CouchDB has started on http://0.0.0.0:5987/

[info] 2023-01-10T08:22:53.455133Z couchdb@127.0.0.1 <0.9.0> -------- Application couch started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:53.455339Z couchdb@127.0.0.1 <0.9.0> -------- Application ets_lru started on node ‘couchdb@127.0.0.1’

[notice] 2023-01-10T08:22:53.485512Z couchdb@127.0.0.1 <0.279.0> -------- rexi_server : started servers

[error] 2023-01-10T08:22:53.487455Z couchdb@127.0.0.1 <0.260.0> -------- Could not get design docs for <<“shards/80000000-9fffffff/medic-user-anastasia_okello-meta.1669742095”>> error:{badarg,[{ets,member,[mem3_openers,<<“medic-user-anastasia_okello-meta”>>],[]},{mem3_shards,maybe_spawn_shard_writer,3,[{file,“src/mem3_shards.erl”},{line,476}]},{mem3_shards,load_shards_from_db,2,[{file,“src/mem3_shards.erl”},{line,381}]},{mem3_shards,load_shards_from_disk,1,[{file,“src/mem3_shards.erl”},{line,370}]},{mem3_shards,for_db,2,[{file,“src/mem3_shards.erl”},{line,59}]},{fabric_view_all_docs,go,5,[{file,“src/fabric_view_all_docs.erl”},{line,24}]},{couch_db,‘-get_design_docs/1-fun-0-’,1,[{file,“src/couch_db.erl”},{line,627}]}]}

[error] 2023-01-10T08:22:53.487531Z couchdb@127.0.0.1 emulator -------- Error in process <0.272.0> on node ‘couchdb@127.0.0.1’ with exit value:

{badarg,[{ets,member,[mem3_openers,<<“medic-user-anastasia_okello-meta”>>],[]},{mem3_shards,maybe_spawn_shard_writer,3,[{file,“src/mem3_shards.erl”},{line,476}]},{mem3_shards,load_shards_from_db,2,[{file,“src/mem3_shards.erl”},{line,381}]},{mem3_shards,load_shards_from_disk,1,[{file,“src/mem3_shards.erl”},{line,370}]},{mem3_shards,for_db,2,[{file,“src/mem3_shards.erl”},{line,59}]},{fabric_view_all_docs,go,5,[{file,“src/fabric_view_all_docs.erl”},{line,24}]},{couch_db,‘-get_design_docs/1-fun-0-’,1,[{file,“src/couch_db.erl”},{line,627}]}]}

[notice] 2023-01-10T08:22:53.491099Z couchdb@127.0.0.1 <0.283.0> -------- rexi_buffer : started servers

[info] 2023-01-10T08:22:53.491305Z couchdb@127.0.0.1 <0.9.0> -------- Application rexi started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:53.517273Z couchdb@127.0.0.1 <0.9.0> -------- Application mem3 started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:53.517385Z couchdb@127.0.0.1 <0.9.0> -------- Application fabric[2023-01-10 08:22:55] {“Kernel pid terminated”,application_controller,“{application_terminated,couch_log,shutdown}”}

Crash dump is being written to: erl_crash.dump…[info] 2023-01-10T08:22:57.806559Z couchdb@127.0.0.1 <0.9.0> -------- Application couch_log started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:57.811803Z couchdb@127.0.0.1 <0.9.0> -------- Application folsom started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:57.857594Z couchdb@127.0.0.1 <0.9.0> -------- Application couch_stats started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:57.857829Z couchdb@127.0.0.1 <0.9.0> -------- Application khash started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:57.865316Z couchdb@127.0.0.1 <0.9.0> -------- Application couch_event started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:57.865541Z couchdb@127.0.0.1 <0.9.0> -------- Application hyper started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:57.872251Z couchdb@127.0.0.1 <0.9.0> -------- Application ibrowse started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:57.878281Z couchdb@127.0.0.1 <0.9.0> -------- Application ioq started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:57.878485Z couchdb@127.0.0.1 <0.9.0> -------- Application mochiweb started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:57.887926Z couchdb@127.0.0.1 <0.212.0> -------- Apache CouchDB 2.3.1 is starting.

[info] 2023-01-10T08:22:57.888016Z couchdb@127.0.0.1 <0.213.0> -------- Starting couch_sup

[notice] 2023-01-10T08:22:57.902276Z couchdb@127.0.0.1 <0.96.0> -------- config: [features] pluggable-storage-engines set to true for reason nil

[info] 2023-01-10T08:22:58.071128Z couchdb@127.0.0.1 <0.212.0> -------- Apache CouchDB has started. Time to relax.

[info] 2023-01-10T08:22:58.071282Z couchdb@127.0.0.1 <0.212.0> -------- Apache CouchDB has started on http://0.0.0.0:5987/

[info] 2023-01-10T08:22:58.071617Z couchdb@127.0.0.1 <0.9.0> -------- Application couch started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:58.071979Z couchdb@127.0.0.1 <0.9.0> -------- Application ets_lru started on node ‘couchdb@127.0.0.1’

[error] 2023-01-10T08:22:58.113103Z couchdb@127.0.0.1 <0.260.0> -------- Could not get design docs for <<“shards/80000000-9fffffff/medic-user-anastasia_okello-meta.1669742095”>> error:{badarg,[{ets,member,[mem3_openers,<<“medic-user-anastasia_okello-meta”>>],[]},{mem3_shards,maybe_spawn_shard_writer,3,[{file,“src/mem3_shards.erl”},{line,476}]},{mem3_shards,load_shards_from_db,2,[{file,“src/mem3_shards.erl”},{line,381}]},{mem3_shards,load_shards_from_disk,1,[{file,“src/mem3_shards.erl”},{line,370}]},{mem3_shards,for_db,2,[{file,“src/mem3_shards.erl”},{line,59}]},{fabric_view_all_docs,go,5,[{file,“src/fabric_view_all_docs.erl”},{line,24}]},{couch_db,‘-get_design_docs/1-fun-0-’,1,[{file,“src/couch_db.erl”},{line,627}]}]}

[error] 2023-01-10T08:22:58.113130Z couchdb@127.0.0.1 emulator -------- Error in process <0.272.0> on node ‘couchdb@127.0.0.1’ with exit value:

{badarg,[{ets,member,[mem3_openers,<<“medic-user-anastasia_okello-meta”>>],[]},{mem3_shards,maybe_spawn_shard_writer,3,[{file,“src/mem3_shards.erl”},{line,476}]},{mem3_shards,load_shards_from_db,2,[{file,“src/mem3_shards.erl”},{line,381}]},{mem3_shards,load_shards_from_disk,1,[{file,“src/mem3_shards.erl”},{line,370}]},{mem3_shards,for_db,2,[{file,“src/mem3_shards.erl”},{line,59}]},{fabric_view_all_docs,go,5,[{file,“src/fabric_view_all_docs.erl”},{line,24}]},{couch_db,‘-get_design_docs/1-fun-0-’,1,[{file,“src/couch_db.erl”},{line,627}]}]}

[notice] 2023-01-10T08:22:58.117928Z couchdb@127.0.0.1 <0.279.0> -------- rexi_server : started servers

[notice] 2023-01-10T08:22:58.122052Z couchdb@127.0.0.1 <0.283.0> -------- rexi_buffer : started servers

[info] 2023-01-10T08:22:58.122339Z couchdb@127.0.0.1 <0.9.0> -------- Application rexi started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:58.163477Z couchdb@127.0.0.1 <0.9.0> -------- Application mem3 started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:22:58.163636Z couchdb@127.0.0.1 <0.9.0> -------- Application fabric[2023-01-10 08:22:59] {“Kernel pid terminated”,application_controller,“{application_terminated,couch_log,shutdown}”}

Crash dump is being written to: erl_crash.dump…[info] 2023-01-10T08:23:02.624519Z couchdb@127.0.0.1 <0.9.0> -------- Application couch_log started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:23:02.631611Z couchdb@127.0.0.1 <0.9.0> -------- Application folsom started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:23:02.680323Z couchdb@127.0.0.1 <0.9.0> -------- Application couch_stats started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:23:02.680572Z couchdb@127.0.0.1 <0.9.0> -------- Application khash started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:23:02.689232Z couchdb@127.0.0.1 <0.9.0> -------- Application couch_event started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:23:02.689467Z couchdb@127.0.0.1 <0.9.0> -------- Application hyper started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:23:02.696694Z couchdb@127.0.0.1 <0.9.0> -------- Application ibrowse started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:23:02.703155Z couchdb@127.0.0.1 <0.9.0> -------- Application ioq started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:23:02.703354Z couchdb@127.0.0.1 <0.9.0> -------- Application mochiweb started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:23:02.712945Z couchdb@127.0.0.1 <0.212.0> -------- Apache CouchDB 2.3.1 is starting.

[info] 2023-01-10T08:23:02.713036Z couchdb@127.0.0.1 <0.213.0> -------- Starting couch_sup

[notice] 2023-01-10T08:23:02.724053Z couchdb@127.0.0.1 <0.96.0> -------- config: [features] pluggable-storage-engines set to true for reason nil

[info] 2023-01-10T08:23:02.842198Z couchdb@127.0.0.1 <0.212.0> -------- Apache CouchDB has started. Time to relax.

[info] 2023-01-10T08:23:02.842380Z couchdb@127.0.0.1 <0.212.0> -------- Apache CouchDB has started on http://0.0.0.0:5987/

[info] 2023-01-10T08:23:02.842611Z couchdb@127.0.0.1 <0.9.0> -------- Application couch started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:23:02.842858Z couchdb@127.0.0.1 <0.9.0> -------- Application ets_lru started on node ‘couchdb@127.0.0.1’

[error] 2023-01-10T08:23:02.871774Z couchdb@127.0.0.1 <0.260.0> -------- Could not get design docs for <<“shards/80000000-9fffffff/medic-user-anastasia_okello-meta.1669742095”>> error:{badarg,[{ets,member,[mem3_openers,<<“medic-user-anastasia_okello-meta”>>],[]},{mem3_shards,maybe_spawn_shard_writer,3,[{file,“src/mem3_shards.erl”},{line,476}]},{mem3_shards,load_shards_from_db,2,[{file,“src/mem3_shards.erl”},{line,381}]},{mem3_shards,load_shards_from_disk,1,[{file,“src/mem3_shards.erl”},{line,370}]},{mem3_shards,for_db,2,[{file,“src/mem3_shards.erl”},{line,59}]},{fabric_view_all_docs,go,5,[{file,“src/fabric_view_all_docs.erl”},{line,24}]},{couch_db,‘-get_design_docs/1-fun-0-’,1,[{file,“src/couch_db.erl”},{line,627}]}]}

[error] 2023-01-10T08:23:02.871911Z couchdb@127.0.0.1 emulator -------- Error in process <0.268.0> on node ‘couchdb@127.0.0.1’ with exit value:

{badarg,[{ets,member,[mem3_openers,<<“medic-user-anastasia_okello-meta”>>],[]},{mem3_shards,maybe_spawn_shard_writer,3,[{file,“src/mem3_shards.erl”},{line,476}]},{mem3_shards,load_shards_from_db,2,[{file,“src/mem3_shards.erl”},{line,381}]},{mem3_shards,load_shards_from_disk,1,[{file,“src/mem3_shards.erl”},{line,370}]},{mem3_shards,for_db,2,[{file,“src/mem3_shards.erl”},{line,59}]},{fabric_view_all_docs,go,5,[{file,“src/fabric_view_all_docs.erl”},{line,24}]},{couch_db,‘-get_design_docs/1-fun-0-’,1,[{file,“src/couch_db.erl”},{line,627}]}]}

[notice] 2023-01-10T08:23:02.877944Z couchdb@127.0.0.1 <0.279.0> -------- rexi_server : started servers

[notice] 2023-01-10T08:23:02.882269Z couchdb@127.0.0.1 <0.283.0> -------- rexi_buffer : started servers

[info] 2023-01-10T08:23:02.882584Z couchdb@127.0.0.1 <0.9.0> -------- Application rexi started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:23:02.928360Z couchdb@127.0.0.1 <0.9.0> -------- Application mem3 started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:23:02.928532Z couchdb@127.0.0.1 <0.9.0> -------- Application fabric[2023-01-10 08:23:04] {“Kernel pid terminated”,application_controller,“{application_terminated,couch_log,shutdown}”}

Crash dump is being written to: erl_crash.dump…[info] 2023-01-10T08:23:07.238969Z couchdb@127.0.0.1 <0.9.0> -------- Application couch_log started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:23:07.244588Z couchdb@127.0.0.1 <0.9.0> -------- Application folsom started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:23:07.291025Z couchdb@127.0.0.1 <0.9.0> -------- Application couch_stats started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:23:07.291260Z couchdb@127.0.0.1 <0.9.0> -------- Application khash started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:23:07.298939Z couchdb@127.0.0.1 <0.9.0> -------- Application couch_event started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:23:07.299170Z couchdb@127.0.0.1 <0.9.0> -------- Application hyper started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:23:07.305703Z couchdb@127.0.0.1 <0.9.0> -------- Application ibrowse started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:23:07.311847Z couchdb@127.0.0.1 <0.9.0> -------- Application ioq started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:23:07.312056Z couchdb@127.0.0.1 <0.9.0> -------- Application mochiweb started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:23:07.321546Z couchdb@127.0.0.1 <0.212.0> -------- Apache CouchDB 2.3.1 is starting.

[info] 2023-01-10T08:23:07.321633Z couchdb@127.0.0.1 <0.213.0> -------- Starting couch_sup

[notice] 2023-01-10T08:23:07.331425Z couchdb@127.0.0.1 <0.96.0> -------- config: [features] pluggable-storage-engines set to true for reason nil

[info] 2023-01-10T08:23:07.434147Z couchdb@127.0.0.1 <0.212.0> -------- Apache CouchDB has started. Time to relax.

[info] 2023-01-10T08:23:07.434390Z couchdb@127.0.0.1 <0.212.0> -------- Apache CouchDB has started on http://0.0.0.0:5987/

[info] 2023-01-10T08:23:07.434803Z couchdb@127.0.0.1 <0.9.0> -------- Application couch started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:23:07.435240Z couchdb@127.0.0.1 <0.9.0> -------- Application ets_lru started on node ‘couchdb@127.0.0.1’

[error] 2023-01-10T08:23:07.466789Z couchdb@127.0.0.1 <0.260.0> -------- Could not get design docs for <<“shards/80000000-9fffffff/medic-user-anastasia_okello-meta.1669742095”>> error:{badarg,[{ets,member,[mem3_openers,<<“medic-user-anastasia_okello-meta”>>],[]},{mem3_shards,maybe_spawn_shard_writer,3,[{file,“src/mem3_shards.erl”},{line,476}]},{mem3_shards,load_shards_from_db,2,[{file,“src/mem3_shards.erl”},{line,381}]},{mem3_shards,load_shards_from_disk,1,[{file,“src/mem3_shards.erl”},{line,370}]},{mem3_shards,for_db,2,[{file,“src/mem3_shards.erl”},{line,59}]},{fabric_view_all_docs,go,5,[{file,“src/fabric_view_all_docs.erl”},{line,24}]},{couch_db,‘-get_design_docs/1-fun-0-’,1,[{file,“src/couch_db.erl”},{line,627}]}]}

[error] 2023-01-10T08:23:07.466982Z couchdb@127.0.0.1 emulator -------- Error in process <0.272.0> on node ‘couchdb@127.0.0.1’ with exit value:

{badarg,[{ets,member,[mem3_openers,<<“medic-user-anastasia_okello-meta”>>],[]},{mem3_shards,maybe_spawn_shard_writer,3,[{file,“src/mem3_shards.erl”},{line,476}]},{mem3_shards,load_shards_from_db,2,[{file,“src/mem3_shards.erl”},{line,381}]},{mem3_shards,load_shards_from_disk,1,[{file,“src/mem3_shards.erl”},{line,370}]},{mem3_shards,for_db,2,[{file,“src/mem3_shards.erl”},{line,59}]},{fabric_view_all_docs,go,5,[{file,“src/fabric_view_all_docs.erl”},{line,24}]},{couch_db,‘-get_design_docs/1-fun-0-’,1,[{file,“src/couch_db.erl”},{line,627}]}]}

[notice] 2023-01-10T08:23:07.470050Z couchdb@127.0.0.1 <0.279.0> -------- rexi_server : started servers

[notice] 2023-01-10T08:23:07.473052Z couchdb@127.0.0.1 <0.283.0> -------- rexi_buffer : started servers

[info] 2023-01-10T08:23:07.473337Z couchdb@127.0.0.1 <0.9.0> -------- Application rexi started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:23:07.502494Z couchdb@127.0.0.1 <0.9.0> -------- Application mem3 started on node ‘couchdb@127.0.0.1’

[info] 2023-01-10T08:23:07.502702Z couchdb@127.0.0.1 <0.9.0> -------- Application fabric[2023-01-10 08:23:09] {“Kernel pid terminated”,application_controller,“{application_terminated,couch_log,shutdown}”}