27 May 2025 Call

Attending

Notes

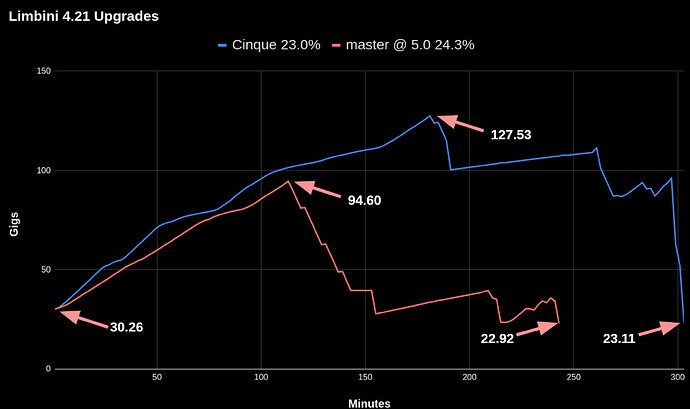

- General discussion about “upgrades take a lot of space” - very likely Hosting TCO Squad 2.0 focus

- why does CHT upgrading (adding new DDocs) cause more than 100% increase disk space?

- view changes are what cause a large increase in disk space, which do not happen every upgrade

- @jkuester to file POC ticket in CHT Coreto show how disk space goes up on a generic couch instance with view re-indexing. Discuss w/ @diana and @twier . We’ll then take this over to Couch Slack channel for questions.

-

- Review main ticket

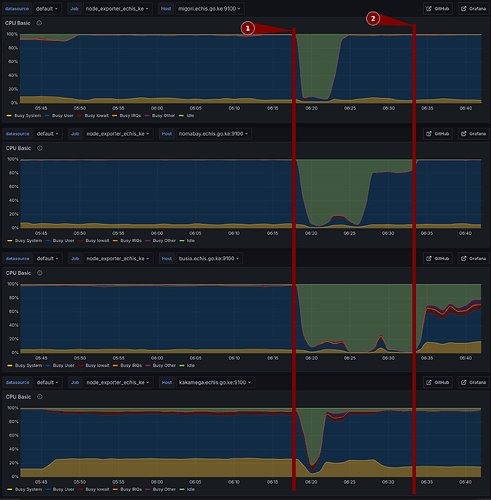

- @elijah - looking to have VMs to test eCHIS KE upgrades clones of their production from 4.11 to nouveau@master

- @mrjones - confirm how extra disk space use when upgrading 4.19/4.18 → nouveau@master